Prepare for embedded audio interviews with real-world questions on PCM, noise vs. distortion, clipping, gain, loudness, latency, and ALSA fundamentals.

Embedded audio interviews are not just about definitions — they test how deeply you understand real-world audio behavior inside embedded systems.

Master Embedded Audio Interview Questions & Answers | Set 2 is carefully designed for engineers preparing for product-based companies, automotive audio roles, and RTOS/Linux/QNX audio positions.

This set focuses on practical audio fundamentals that interviewers frequently ask but candidates often struggle to explain clearly.

What You’ll Learn in This Set

This module dives into the core building blocks of audio systems, explained with clarity, real-life examples, and interview-ready logic:

- Difference between noise, distortion, and clipping — with real debugging insight

- How amplitude, loudness, and gain differ (a very common interview trap)

- What causes pop and click sounds in embedded audio systems

- How fade-in and fade-out prevent audio artifacts

- Understanding dynamic range and its relation to bit depth

- How to detect audio issues using oscilloscope waveforms and logs

- Real explanations aligned with ALSA, PCM, ADC/DAC, and RTOS audio pipelines

Each concept is explained the way senior engineers expect you to explain it in interviews, not like textbook theory.

Why This Set Is Important for Interviews

Most candidates can recite definitions; very few can explain cause, effect, and solution.

Interviewers often ask:

- “Why do pops occur when audio starts?”

- “Is clipping noise or distortion?”

- “Why does low gain increase noise?”

- “How do you detect clipping in logs?”

This set prepares you to answer confidently, clearly, and technically, even under pressure.

Who Should Use This Set?

- Embedded Software Engineers

- Audio / Multimedia Engineers

- Linux & QNX Developers

- Automotive Infotainment Engineers

- Freshers preparing for embedded interviews

- Professionals revising audio fundamentals

Whether you’re targeting Qualcomm, Bosch, KPIT, Harman, Continental, or automotive OEMs, these questions are directly aligned with real interview expectations.

1. What is Jitter in Audio?

One-Line Interview Answer

Jitter is the variation or instability in the timing of audio sample playback or capture, causing irregularities in the signal.

Step-by-Step Explanation

Timing Matters

- In digital audio, samples must be played or captured at precise intervals.

- Example: 48 kHz → 1 frame every 20.83 µs.

If this timing is not exact, audio gets distorted.

What is Jitter

- Jitter = small deviations in timing

- Occurs in:

- ADC (Analog-to-Digital Converter)

- DAC (Digital-to-Analog Converter)

- Clock signals

- DMA transfers

Effect:

- Audio may have clicks, pops, or slight pitch fluctuation

- Low-frequency jitter → subtle distortion

- High-frequency jitter → noise

Real-Life Analogy

Think of a metronome:

- Perfect beat → steady music

- Irregular beat → jitter → music feels off

In audio systems, jitter is like irregular beat intervals in digital playback.

Types of Jitter

| Type | Description |
|---|---|
| Random jitter | Unpredictable, caused by noise, clock instability |
| Deterministic jitter | Predictable pattern, caused by systematic issues like periodic interrupts |

Why Jitter Matters in Embedded / ALSA

- Audio in real-time systems is very sensitive

- Jitter causes:

- Undesired clicks or pops

- Phase errors in multi-channel audio

- Reduced audio quality

Example:

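- Multi-channel playback across separate DACs or I²S links with unsynchronized clocks → channels drift relative to each other → phase errors (the two channels of a single I²S link share one bit clock, so jitter on that clock affects both equally)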

How to Reduce Jitter

- Use stable clock sources

- Buffering → absorbs small timing variations

- DMA transfers → reduce CPU-dependent timing errors

- High-quality PLL or crystal oscillators in audio ICs

Relation to Latency

- Latency = fixed delay between input and output

- Jitter = variation around that delay

Low latency system can still have high jitter if timing is unstable.

Interview Trap

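1. “Does increasing buffer size reduce jitter?”

Partly. Larger buffers absorb variation in when software delivers data (scheduling jitter), at the cost of higher latency. They do not remove jitter on the sampling clock at the ADC/DAC.

2. “Is jitter the same as latency?”

No. Jitter is the variation in timing; latency is the average delay.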

ALSA / Embedded Example

Sample rate = 48 kHz

Expected frame interval = 20.83 µs

Actual frame intervals = 20.83 µs ± 2 µs

→ 2 µs deviation = jitter

Interview Summary

Jitter is the irregularity in the timing of audio sample capture or playback, causing noise or distortion. It’s different from latency, which is the fixed delay in the audio path.

Jitter vs Latency Comparison Table

| Feature | Latency | Jitter |
|---|---|---|
| Definition | Fixed delay between audio input/capture and output/playback | Variation or instability in timing of audio sample capture/playback |
| Nature | Roughly constant for a given configuration | Varies over time (random and deterministic components) |
| Unit | Time (ms) | Time variation (µs or ms) |
| Effect on audio | Delay in hearing sound | Clicks, pops, distortion, pitch fluctuation |
| Measurement | Buffer size / sample rate | Frame interval variation (deviation from expected) |
| Cause | Buffer size, processing time, DMA transfer time | Clock instability, CPU interrupts, irregular DMA timing, PLL errors |
| Mitigation | Reduce buffer, optimize processing, increase sample rate | Stable clock, proper buffering, DMA, low-noise oscillator |
| Relation | Latency can be constant | Jitter is deviation around latency |

Key Interview Tip:

“Latency is the average delay; jitter is the variation around that delay.”

2. How to Measure Jitter in Embedded Audio Systems

Step 1: Understand the Expected Timing

- Determine the frame interval from the sample rate (channel count does not change it: one frame holds one sample per channel)

Sample rate = 48 kHz → Frame interval = 1 / 48000 ≈ 20.83 µs

Step 2: Capture Actual Timestamps

- Use high-resolution timers in your embedded system

- Record when each frame is processed or played

- Example in QNX / Linux:

#include <time.h>

struct timespec ts;
clock_gettime(CLOCK_MONOTONIC, &ts);
// store ts for each frame processed

Step 3: Compute Frame Interval Differences

- Calculate delta between consecutive frames:

delta = ts[n] - ts[n-1]

- Compare to expected frame interval

Expected = 20.83 µs

Actual = delta

Jitter = Actual - Expected

Step 4: Analyze Statistics

- Max jitter = worst-case deviation

- RMS jitter = root-mean-square deviation for typical behavior

- Histogram → shows distribution of timing deviations
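The statistics in Steps 3 and 4 can be sketched in C as follows. This is a minimal sketch under stated assumptions, not a reference implementation: `jitter_stats_t`, `timespec_diff_ns`, and `compute_jitter_stats` are hypothetical names, the timestamps are assumed to come from `clock_gettime(CLOCK_MONOTONIC, ...)`, and `expected_ns` is the nominal interval of whatever event is being timestamped (in software this is usually one period rather than one frame). The function returns the mean squared deviation; RMS jitter is its square root.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <time.h>

/* Hypothetical result type for this sketch. */
typedef struct {
    int64_t max_abs_ns;  /* worst-case |actual - expected| */
    double  mean_sq_ns2; /* mean squared deviation; RMS jitter = sqrt of this */
} jitter_stats_t;

/* Returns b - a in nanoseconds. */
int64_t timespec_diff_ns(const struct timespec *a, const struct timespec *b)
{
    return (int64_t)(b->tv_sec - a->tv_sec) * 1000000000LL
         + (int64_t)(b->tv_nsec - a->tv_nsec);
}

/* ts: n timestamps, one per event; expected_ns: nominal interval between them. */
jitter_stats_t compute_jitter_stats(const struct timespec *ts, size_t n,
                                    int64_t expected_ns)
{
    jitter_stats_t s = { 0, 0.0 };
    double sum_sq = 0.0;

    assert(ts != NULL && n >= 2);
    for (size_t i = 1; i < n; i++) {
        /* Jitter = Actual - Expected for this interval. */
        int64_t dev = timespec_diff_ns(&ts[i - 1], &ts[i]) - expected_ns;
        int64_t mag = dev < 0 ? -dev : dev;
        if (mag > s.max_abs_ns)
            s.max_abs_ns = mag;
        sum_sq += (double)dev * (double)dev;
    }
    s.mean_sq_ns2 = sum_sq / (double)(n - 1);
    return s;
}
```

For 256-frame periods at 48 kHz, `expected_ns` would be about 5333333 (256 / 48000 s). The per-interval deviations can also be bucketed into a histogram if the distribution matters more than the summary figures.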

Step 5: Optional: Use Oscilloscope / Logic Analyzer

- For I²S, TDM, or PDM audio lines

- Measure clock edges and data transitions

- Compare actual spacing with expected timing → hardware jitter

Embedded / ALSA Example

- ALSA driver callback provides period completion timestamps

- Measure variation between expected period intervals → jitter

- Formula:

Jitter (µs) = period_actual_time - period_expected_time

Interview Summary

Latency is the fixed delay in audio playback, while jitter is the small timing variations around that delay. In embedded systems, jitter is measured by comparing actual frame/period timing against the expected intervals, using high-resolution timers or hardware measurement.

3. How to Reduce Jitter and Latency in Embedded ALSA/QNX Audio Systems

Optimize Buffer Size

- Latency depends on buffer size:

Latency ≈ Buffer Size / Sample Rate

- Reduce buffer size → lower latency

- Example: 2–4 periods instead of 8

- Trade-off: too small → risk of underrun → pops/clicks → increases perceived jitter

- Rule of Thumb:

- Real-time audio: 10–20 ms total latency

- Playback: 50–150 ms acceptable
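The relation Latency ≈ Buffer Size / Sample Rate is easy to check numerically. The helper below is a small illustrative sketch (the name `frames_to_us` is hypothetical) that converts a frame count into microseconds using integer arithmetic, which is handy on targets without an FPU.

```c
#include <assert.h>
#include <stdint.h>

/* Duration of `frames` audio frames at `rate_hz`, in microseconds (rounded down). */
uint64_t frames_to_us(uint64_t frames, uint32_t rate_hz)
{
    assert(rate_hz > 0);
    return frames * 1000000ULL / rate_hz;
}
```

For example, `frames_to_us(1024, 48000)` gives 21333 µs (about 21.3 ms for a full 1024-frame buffer), and `frames_to_us(256, 48000)` gives 5333 µs per 256-frame period.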

Reduce Period Size

- Period = number of frames before interrupt / callback

- Smaller period → more frequent processing → lower latency

- Larger period → fewer interrupts → reduces CPU load but adds delay

- ALSA Example:

aplay -D hw:0,0 --period-size=256 --buffer-size=1024 file.wav

- Period = 256 frames → faster callbacks → lower latency

Use High-Quality, Stable Clocks

- Jitter is mostly caused by clock instability

- Use:

- High-precision crystal oscillator

- Stable PLL for I²S/TDM interfaces

- Dedicated audio clocks if possible

- Avoid CPU software-based clocks for timing-sensitive audio

Use DMA for Audio Transfers

- CPU-driven audio → irregular timing → jitter

- DMA (Direct Memory Access) → transfer frames to DAC/ADC without CPU delay

- ALSA/QNX supports DMA-based drivers → very low jitter

- Example: I²S playback via DMA → period callback occurs precisely

Choose Appropriate Sample Rate

- Higher sample rate → shorter frame duration → reduces per-frame latency

- Typical choices:

- 48 kHz → embedded / video

- 96 kHz → professional DSP (wider bandwidth, gentler anti-aliasing filters)

Optimize CPU & IRQ Handling

- Audio interrupts must have high priority

- Avoid long ISR or blocking code

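- QNX supports real-time thread priorities (the same POSIX calls work on Linux); the valid range is platform-specific, so query it instead of hard-coding a number:

#include <pthread.h>
#include <sched.h>

struct sched_param sp;
sp.sched_priority = sched_get_priority_max(SCHED_RR); // top of the SCHED_RR range on this platform
int rc = pthread_setschedparam(pthread_self(), SCHED_RR, &sp); // 0 on success, error number otherwise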

- Keeps period callbacks precise → reduces jitter

Use Interleaved vs Non-Interleaved Wisely

| Layout | Impact on Latency/Jitter |
|---|---|
| Interleaved | Simple DMA transfer, lower jitter |
| Non-Interleaved | Multiple buffers → potential DMA overhead → slightly higher jitter |

Embedded systems usually use interleaved for efficiency

Use Proper ALSA Parameters

- Configure ALSA driver with:

snd_pcm_hw_params_set_buffer_size_near()

snd_pcm_hw_params_set_period_size_near()

- Choose power-of-2 sizes → DMA alignment → less jitter

Minimize Processing Overhead

- Heavy DSP in real-time thread → increases latency

- Offload to:

- Dedicated DSP cores

- Secondary threads with lower priority

- Keep main audio thread lightweight

Monitor and Tune in Real Time

- Check XRUNs (buffer underrun/overrun) → indicates latency/jitter issues

- Use ALSA API:

snd_pcm_avail_update(pcm_handle); // Frames available in buffer

- Measure timestamp deviations → detect jitter

Practical Embedded Example (QNX / ALSA)

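// Set buffer and period
// (hw_params is assumed to be a snd_pcm_hw_params_t * already initialised with snd_pcm_hw_params_any())
snd_pcm_uframes_t buffer_size = 1024; // frames
snd_pcm_uframes_t period_size = 256;  // frames
int dir = 0;                          // 0 = ask for the exact value if possible
snd_pcm_hw_params_set_buffer_size_near(pcm_handle, hw_params, &buffer_size);
snd_pcm_hw_params_set_period_size_near(pcm_handle, hw_params, &period_size, &dir);

// Set real-time priority
struct sched_param sp;
sp.sched_priority = sched_get_priority_max(SCHED_RR); // range is platform-specific
pthread_setschedparam(pthread_self(), SCHED_RR, &sp);

- With stable clock + DMA + this configuration → full-buffer latency ≈ 21 ms at 48 kHz (1024 / 48000 s), one period every ≈ 5.3 ms; sampling jitter is then set by the audio clock hardware rather than by software scheduling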

Summary Statement

To reduce jitter and latency in embedded ALSA/QNX systems: use small buffers and periods, stable clocks, DMA for transfers, interleaved audio format, real-time thread priority, and optimized DSP processing. Proper tuning avoids underruns and keeps audio precise and responsive.

4. What is Clipping in Audio?

One-Line Interview Answer

Clipping occurs when the amplitude of an audio signal exceeds the maximum level that a system (ADC, DAC, or amplifier) can handle, resulting in distortion.

Step-by-Step Explanation

Why Clipping Happens

- Every audio system has a maximum voltage range:

- ADC/DAC: Limited by reference voltage

- Amplifier: Limited by supply rails

- If the audio signal exceeds this range, the peaks are “cut off” → clipped

Example:

ADC max = ±1V

Input signal = ±1.2V

→ Peaks above ±1V are clipped

How It Looks in a Waveform

- Normal signal: smooth sine wave

- Clipped signal: flat tops where the waveform exceeds the max

Normal: /‾\ /‾\

Clipped: ___ ___

Types of Clipping

| Type | Description |
|---|---|
| Soft clipping | Peaks are rounded slightly → mild distortion |
| Hard clipping | Peaks are cut flat → harsh, unpleasant distortion |

Effects on Audio

- Adds harmonics → unpleasant sound

- Distorts voice or music

- Can damage speakers if extreme

Why Clipping is Important in Embedded Audio

- ADC / DAC in microcontrollers / SoCs has limited bit depth & voltage range

- Overshooting → digital clipping

- Amplifiers in embedded devices → analog clipping

How to Prevent Clipping

- Reduce input signal level → avoid exceeding ADC/DAC range

- Use automatic gain control (AGC) → keeps signal within limits

- Keep headroom in processing → use wider intermediate types (for example 32-bit accumulators for 16-bit samples) so sums and gains do not overflow before the final clamp

- Limit amplifier output → ensure it doesn’t exceed supply rails

Example in ALSA / PCM

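- 16-bit PCM → sample range = -32768 to +32767

- A computed value outside that range must be clamped to the limit → flattened peaks → distortion

#include <stdint.h>

int32_t mixed = 40000; // exceeds the 16-bit maximum; stored directly in an int16_t it would wrap, not clip
int16_t sample = (mixed > INT16_MAX) ? INT16_MAX : (int16_t)mixed; // clipped to 32767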
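Digital clipping most often shows up when streams are mixed or gain is applied. The sketch below illustrates one common pattern under stated assumptions: compute in a wider type, then saturate to the 16-bit range. The names `clip16` and `mix_s16` are hypothetical, not part of ALSA or any other library.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Clamp a 32-bit intermediate value to the signed 16-bit PCM range. */
int16_t clip16(int32_t x)
{
    if (x > INT16_MAX) return INT16_MAX;
    if (x < INT16_MIN) return INT16_MIN;
    return (int16_t)x;
}

/* Mix two 16-bit streams sample by sample.
 * Returns how many output samples had to be clipped. */
size_t mix_s16(const int16_t *a, const int16_t *b, int16_t *out, size_t n)
{
    size_t clipped = 0;

    assert(a != NULL && b != NULL && out != NULL);
    for (size_t i = 0; i < n; i++) {
        int32_t sum = (int32_t)a[i] + (int32_t)b[i]; /* cannot overflow int32 */
        out[i] = clip16(sum);
        if (out[i] != sum)
            clipped++;
    }
    return clipped;
}
```

Returning the clipped-sample count gives the caller something to log, which ties in with detecting clipping from software rather than only on a scope.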

Interview Trap

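1. “Does clipping increase volume?”

Not in a useful way. Peaks are capped at the system limit, so pushing the level further mainly adds harmonics and harshness instead of clean loudness.

2. “Is clipping the same as saturation?”

Not exactly. In analog audio, saturation usually means gradual, soft limiting; clipping usually means abrupt, hard limiting.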

Interview Summary

Clipping is distortion caused when an audio signal exceeds the maximum amplitude a system can handle, resulting in flattened peaks. It can occur in ADCs, DACs, or amplifiers and is avoided by proper signal level management.

5. Difference Between Clipping and Distortion

| Feature | Clipping | Distortion |
|---|---|---|
| Definition | Occurs when audio amplitude exceeds system limits, flattening peaks | Any alteration of the original audio waveform that changes its shape |
| Cause | Excessive signal amplitude | Could be gain, filtering, compression, non-linear circuits, or clipping |
| Effect on waveform | Hard flat tops (hard clipping) or rounded peaks (soft clipping) | May include harmonic changes, phase shifts, or clipping |
| Intentional? | Usually unwanted | Sometimes intentional (guitar distortion, effects) |
| Example | ADC max exceeded → waveform peak flattened | Guitar amp overdrive, EQ effect, or amplifier nonlinearity |

Key Interview Tip:

All clipping is distortion, but not all distortion is clipping.

Digital vs Analog Clipping in Embedded Audio

| Feature | Digital Clipping | Analog Clipping |
|---|---|---|
| Where it occurs | ADC, DAC, or PCM sample exceeding bit depth | Amplifier exceeds voltage rails |
| Waveform appearance | Hard, abrupt flat tops (quantized) | Can be soft/rounded depending on circuit |
| Detection | Easy: compare sample to min/max value | Hard: requires oscilloscope or measurement |
| Repair | Cannot recover lost digital samples | Sometimes soft clipping can be filtered |
| Embedded relevance | ADC input clipping, PCM sample overflow | Amplifier output in microcontroller audio boards |

Example:

- 16-bit PCM range = -32768 to +32767 → computed value 40000 → digital clipping

- Amplifier on a 3.3 V rail → output would need to swing to 4 V → analog clipping

How Clipping Relates to Bit Depth and Sample Rate

Bit Depth

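- Defines amplitude resolution (number of steps), not the analog full-scale level

- Lower bit depth → coarser steps, a higher quantization noise floor, and less headroom in intermediate calculations

- Example: 8-bit signed PCM → range -128 to +127; values beyond it are clipped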

Sample Rate

- Does NOT directly prevent clipping, but higher sample rate can capture peaks more accurately

- True peaks can fall between samples (inter-sample peaks), so a signal whose stored samples stay below full scale can still clip after reconstruction or resampling

Practical Embedded Example

- 16-bit, 48 kHz PCM audio:

- Max sample = ±32767

- Input signal > ±32767 → clipped

- Lowering input gain or using AGC prevents clipping

Summary

- Clipping: waveform peaks exceed system limits → distortion

- Distortion: any waveform alteration (clipping is a subset)

- Digital vs Analog: digital = sample overflow, analog = voltage rails

- Bit depth: sets resolution and noise floor; wider intermediate formats add headroom against overflow

- Sample rate: higher rate → captures peaks better, but doesn’t prevent clipping

6. What Causes Noise in Audio?

One-Line Interview Answer

Audio noise is caused by unwanted electrical, digital, or environmental disturbances that get added to the original audio signal.

Main Causes of Noise (Big Picture)

Audio noise can come from three major areas:

1. Analog domain

2. Digital domain

3. System / environment

Analog Causes of Noise (Most Common)

Thermal Noise

- Comes from resistors, ICs, and amplifiers

- Increases with temperature

- Always present (cannot be eliminated)

Interview keyword: Johnson–Nyquist noise

Power Supply Noise

- Ripple or spikes on power rails

- Poor decoupling capacitors

- Switching regulators near audio paths

Common in embedded boards

Electromagnetic Interference (EMI)

- Nearby sources:

- Wi-Fi

- Bluetooth

- GSM

- Motors

- Switching SMPS

Result: humming, buzzing, clicks

Ground Loops

- Multiple ground paths with different potentials

- Creates hum (50/60 Hz)

Very common in audio hardware

Poor Analog Layout

- Long traces

- No ground plane

- Audio lines near high-speed digital signals

Digital Causes of Noise

Quantization Noise

- Due to limited bit depth

- More prominent at low bit depths (8-bit)

Higher bit depth → lower noise floor

Clock Jitter

- Timing variation in sampling clock

- Causes phase noise, especially in high-frequency audio

Common interview favorite

Buffer Underrun / Overrun

- CPU cannot feed audio fast enough

- Causes pops, clicks

Seen in ALSA / QNX systems

Improper Sample Rate Conversion

- Poor SRC algorithms

- Creates artifacts and noise

Environmental & System Causes

Microphone Self-Noise

- Mic electronics generate noise

- Cheap mics = higher noise floor

Acoustic Noise

- Wind, vibrations

- Mechanical noise near mic

Software Gain Mismanagement

- Excessive digital gain boosts noise floor

- Wrong AGC settings

Interview-Friendly Classification Table

| Noise Type | Cause |
|---|---|
| Hiss | Thermal, quantization noise |
| Hum | Ground loops, power ripple |
| Buzz | EMI interference |
| Click/Pop | Buffer underrun, clock issues |
| Crackle | Bad connectors, clipping |

Embedded Audio Example

Problem:

You hear a buzz when Wi-Fi is ON.

Cause:

EMI coupling into analog mic lines.

Fix:

- Shielding

- Proper grounding

- Differential inputs

- Filtering

7. Noise vs Distortion vs Clipping

One-Line Definitions

- Noise → Unwanted random signal added to audio

- Distortion → Change in shape of the original audio signal

- Clipping → Signal exceeds system limits and gets cut

All clipping is distortion, but not all distortion is clipping

Core Difference Table (Must-Remember)

| Aspect | Noise | Distortion | Clipping |
|---|---|---|---|
| Nature | Random | Deterministic | Severe, non-linear |
| Added or changed? | Added to signal | Signal shape altered | Peaks removed |
| Relation to signal | Independent | Depends on signal | Depends on amplitude |
| Predictable? | No | Yes | Yes |
| Intentional? | Never | Sometimes | Never |
| Example sound | Hiss, hum | Harsh, fuzzy | Crack, harsh cut |

Noise (Unwanted Addition)

What it is

- Extra signal added on top of audio

- Present even in silence

Examples

- Hiss

- Hum (50/60 Hz)

- EMI buzz

Embedded causes

- Thermal noise

- Power supply ripple

- Quantization noise

- EMI

Key line:

Noise lowers SNR; it is added on top of the signal and is present whether or not the signal is.

Distortion (Waveform Change)

What it is

- Audio signal shape is altered

- Creates new harmonics

Examples

- Amplifier non-linearity

- EQ saturation

- Soft clipping

- Guitar overdrive (intentional)

Embedded causes

- Non-linear ADC/DAC

- Amplifier saturation

- Bad filtering

Key line:

Distortion changes the signal itself.

Clipping (Limit Exceeded)

What it is

- Signal amplitude exceeds system limits

- Peaks get cut flat

Types

- Digital clipping → ADC/DAC overflow

- Analog clipping → amplifier rail limit

Embedded example

- 16-bit PCM max = ±32767

- Input = 40000 → clipped

Key line:

Clipping is a severe form of distortion caused by overload.

Visual Memory Trick

Clean Signal:

/\ /\

Noise:

/\~~~/\~~~

Distortion:

/\/\__/\/\_

Clipping:

__|‾‾|__|‾‾|__

Interview Trap Questions

1. Is noise distortion?

No. Noise is added to the signal; distortion modifies the signal itself.

2. Can clipping occur without distortion?

No. Clipping is a form of distortion.

3. Does higher bit depth reduce noise or distortion?

Noise (quantization), not distortion.

Embedded Audio Summary Table

| Problem | Fix |
|---|---|
| Noise | Better grounding, higher bit depth |
| Distortion | Linear amplifiers, proper gain |
| Clipping | Reduce gain, increase headroom |

Interview Answer

Noise is unwanted random signal added to audio, distortion is any change in waveform shape, and clipping is distortion caused by exceeding system limits.

Ultimate One-Liner

Noise adds, distortion alters, clipping cuts.

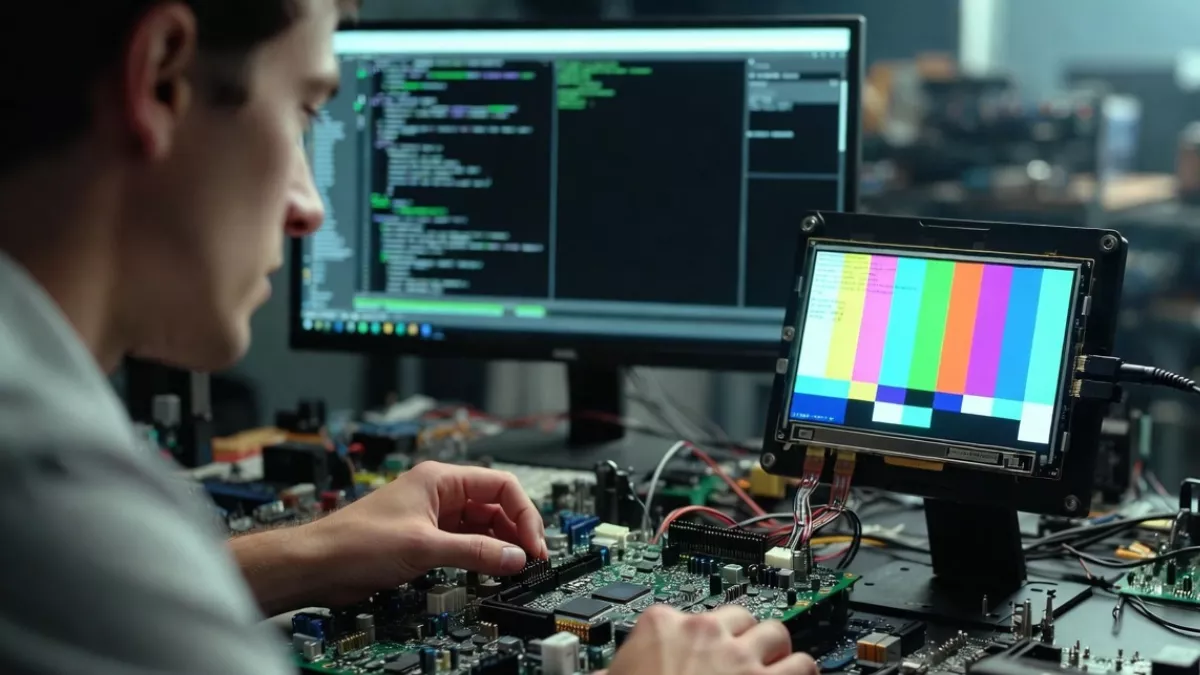

8. How to Detect Noise vs Distortion vs Clipping on an Oscilloscope or in Logs?

Noise

- Oscilloscope:

- Random small fluctuations on waveform

- Noise visible even when input is silence

- Logs / Software:

- High noise floor

- Low SNR readings

Distortion

- Oscilloscope:

- Waveform shape altered (no longer clean sine)

- Extra ripples or asymmetry

- Logs / Software:

- High THD (Total Harmonic Distortion)

Clipping

- Oscilloscope:

- Flat tops or bottoms on waveform peaks

- Logs / Software:

- Samples hitting max/min values

- Overflow or saturation warnings
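The "samples hitting max/min values" check can be automated. The sketch below is one possible approach, not an established tool: it counts runs of consecutive samples stuck at the same 16-bit rail, since a single full-scale sample can be a legitimate peak while several in a row suggest a flattened top. The name `count_clipped_runs` and the `min_run` threshold are assumptions made for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Counts runs of at least `min_run` consecutive samples that sit at the
 * same rail (INT16_MAX or INT16_MIN). Each run is counted once. */
size_t count_clipped_runs(const int16_t *buf, size_t n, size_t min_run)
{
    size_t runs = 0;
    size_t run_len = 0;
    int16_t prev = 0;

    assert(buf != NULL && min_run >= 1);
    for (size_t i = 0; i < n; i++) {
        int16_t s = buf[i];
        if (s == INT16_MAX || s == INT16_MIN) {
            /* Extend the run only if we are still on the same rail. */
            run_len = (run_len > 0 && s == prev) ? run_len + 1 : 1;
            if (run_len == min_run)
                runs++;
        } else {
            run_len = 0;
        }
        prev = s;
    }
    return runs;
}
```

A capture or playback thread could call this once per period and log a warning when the result is non-zero; a `min_run` of 2 or 3 is a starting point to tune, not a standard value.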

One-Line Memory Trick

Noise = random fuzz, Distortion = shape change, Clipping = flat peaks

9. What is Dynamic Range?

Dynamic range is the ratio between the loudest signal an audio system can handle without distortion and the quietest signal it can resolve above its noise floor.

Interview Keyword

- Measured in decibels (dB)

Simple Example

- Whisper → quietest sound

- Shout → loudest sound

The gap between them = dynamic range

Quick Tip

Higher bit depth → higher dynamic range
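The link between bit depth and dynamic range can be made concrete with the standard approximation for an ideal N-bit linear PCM quantizer. This is a theoretical upper bound: real converters fall short of it because of analog noise.

$$
\mathrm{DR} \approx 20\log_{10}\!\left(2^{N}\right) \approx 6.02\,N\ \text{dB}
\qquad\Rightarrow\qquad
N = 16:\ \approx 96.3\ \text{dB}, \qquad N = 24:\ \approx 144.5\ \text{dB}
$$

In other words, each extra bit adds roughly 6 dB. For a full-scale sine wave the ideal signal-to-quantization-noise ratio is often quoted as $6.02\,N + 1.76$ dB.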

10. Explain Gain vs Volume

Gain

Gain controls the input signal level before processing or amplification.

- Affects signal strength

- Too much gain → clipping/distortion

- Used at mic, preamp, ADC input

Volume

Volume controls the output loudness sent to speakers or headphones.

- Affects listening level

- Does not change signal quality

- Used at DAC output, amplifier

One-Line Interview Trick

Gain sets how much signal you capture, volume sets how loud you play it.

Common Trap

Low gain + high volume = noisy audio

Increasing volume ≠ fixing low gain

11. What is Fade-In / Fade-Out?

Fade-In

Gradually increases audio amplitude from silence to normal level.

- Prevents sudden start

- Avoids clicks/pops at beginning

Fade-Out

Gradually decreases audio amplitude from normal level to silence.

- Prevents abrupt stop

- Sounds smooth and natural

Why it’s used (Embedded / Audio Systems)

- Avoids clicks & pops

- Smooth start/stop of playback

- Used in media players, alerts, UI sounds

One-Line Interview Answer

Fade-in and fade-out smoothly ramp audio amplitude to avoid abrupt transitions and artifacts.
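A linear ramp is the simplest way to implement this. The following is a minimal sketch for a mono 16-bit buffer; the function names `fade_in_s16` and `fade_out_s16` are hypothetical, and production code often uses a smoother curve (for example raised cosine) and handles interleaved channels.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Linear fade-in over the first `ramp` samples: gain goes 0, 1/ramp, 2/ramp, ... */
void fade_in_s16(int16_t *buf, size_t n, size_t ramp)
{
    assert(buf != NULL || n == 0);
    if (ramp > n)
        ramp = n;
    for (size_t i = 0; i < ramp; i++)
        buf[i] = (int16_t)((int64_t)buf[i] * (int64_t)i / (int64_t)ramp);
}

/* Linear fade-out over the last `ramp` samples: the final sample ends at 0. */
void fade_out_s16(int16_t *buf, size_t n, size_t ramp)
{
    assert(buf != NULL || n == 0);
    if (ramp > n)
        ramp = n;
    for (size_t i = 0; i < ramp; i++) {
        size_t idx = n - 1 - i; /* walk backwards from the last sample */
        buf[idx] = (int16_t)((int64_t)buf[idx] * (int64_t)i / (int64_t)ramp);
    }
}
```

At 48 kHz a ramp of a few hundred samples (a few milliseconds) is a typical starting point; the right length depends on the content and should be tuned by ear or on a scope.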

12. What Causes Pop and Click Sounds?

Main Causes

- Sudden amplitude change

- Start/stop audio abruptly

- No fade-in / fade-out

- DC offset

- Non-zero signal when playback starts/stops

- Buffer underrun / overrun

- Audio data not fed in time

- Sample rate / clock mismatch

- Clock drift or reconfiguration during playback

- Mute / unmute without ramp

- Hard mute toggling

- Power events

- Codec or amplifier power on/off

Detection

- Oscilloscope: sharp spikes at transitions

- Logs: underrun, xrun, timing warnings

One-Line Interview Answer

Pop and click sounds are caused by sudden signal changes, buffer underruns, DC offsets, or improper clock and power handling.

Quick Fixes

- Use fade-in / fade-out

- Apply mute ramping

- Ensure stable buffers & clocks

- Clear DC offset before start/stop
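As an illustration of the DC offset fix, the sketch below removes the average value of a block of samples. It is a deliberately simple approach with a hypothetical function name (`remove_dc_offset_s16`); streaming systems more commonly use a high-pass (DC-blocking) filter, because a per-block mean can itself introduce steps between blocks.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Subtracts the block mean from every sample; returns the offset removed. */
int16_t remove_dc_offset_s16(int16_t *buf, size_t n)
{
    assert(buf != NULL || n == 0);
    if (n == 0)
        return 0;

    int64_t sum = 0;
    for (size_t i = 0; i < n; i++)
        sum += buf[i];
    int32_t mean = (int32_t)(sum / (int64_t)n);

    for (size_t i = 0; i < n; i++) {
        int32_t v = (int32_t)buf[i] - mean;
        /* Subtracting the mean can push a sample past the 16-bit range. */
        if (v > INT16_MAX) v = INT16_MAX;
        if (v < INT16_MIN) v = INT16_MIN;
        buf[i] = (int16_t)v;
    }
    return (int16_t)mean;
}
```

Running this on the first and last blocks before applying a fade keeps the ramp from starting or ending on a non-zero level.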

13. What is Loudness?

Loudness is how strong or loud a sound is perceived by the human ear, not just how big the signal is.

Key Points

- Perceptual → depends on human hearing

- Related to amplitude, but not the same

- Varies with frequency (ear is more sensitive to mid-frequencies)

Units

- Measured in LUFS (modern audio)

- Sometimes referenced in dB SPL (physical sound pressure)

Interview Trap

- Amplitude ≠ Loudness

- Same amplitude, different frequencies → different loudness

One-Line Interview Answer

Loudness is the perceived strength of sound as heard by humans, influenced by amplitude, frequency, and ear sensitivity.

14. What is Amplitude?

Amplitude is the height or strength of an audio signal that represents how much the air pressure (or electrical signal) varies from its rest position.

Key Points

- Represents signal strength

- In audio, higher amplitude → potentially louder sound

- Measured as:

- Voltage (analog)

- Sample value (digital PCM)

Important Distinction

- Amplitude is physical/electrical

- Loudness is perceptual (human hearing)

One-Line Interview Answer

Amplitude is the magnitude of an audio signal, indicating how strong the sound wave is.

Frequently Asked Questions (FAQ)

1. What is PCM audio in embedded systems?

Answer: PCM (Pulse Code Modulation) is the method of converting analog audio signals into digital samples so embedded systems can process, store, and transmit sound.

2. What is the difference between amplitude and loudness?

Answer: Amplitude is the signal strength (electrical/physical), while loudness is how humans perceive the sound. Higher amplitude usually increases loudness, but frequency also affects perception.

3. What causes pops and clicks in embedded audio?

Answer: Pops and clicks occur due to sudden signal changes, buffer underrun/overrun, DC offset, or clock instability during playback.

4. What is the difference between noise, distortion, and clipping?

Answer: Noise is unwanted random signal, distortion changes the waveform shape, and clipping is a type of distortion caused when the signal exceeds system limits.

5. How do gain and volume differ?

Answer: Gain controls input signal strength before processing, while volume controls output loudness to speakers or headphones.

6. What is dynamic range in audio systems?

Answer: Dynamic range is the ratio between the loudest signal a system can handle without distortion and the quietest signal it can resolve above its noise floor, usually measured in dB.

7. Why do fade-in and fade-out prevent pop and click sounds?

Answer: Fade-in gradually increases amplitude at the start, and fade-out decreases amplitude at the end. This smooth ramp avoids abrupt transitions that cause pops and clicks.

8. How is audio latency different from jitter?

Answer: Latency is the fixed delay between input and output, whereas jitter is the small timing variation around that delay, which can cause clicks, pops, or phase errors.

9. What is clipping and how can it be prevented?

Answer: Clipping occurs when signal peaks exceed the system’s maximum level. It can be prevented by reducing input gain, increasing headroom, or using automatic gain control (AGC).

10. How do you detect noise, distortion, or clipping in embedded audio systems?

Answer:

- Noise: Random fluctuations visible on oscilloscope or high noise floor in logs

- Distortion: Altered waveform shape or high THD readings

- Clipping: Flat peaks in waveform or samples hitting max/min values


Mr. Raj Kumar is a highly experienced Technical Content Engineer with 7 years of dedicated expertise in the intricate field of embedded systems. At Embedded Prep, Raj is at the forefront of creating and curating high-quality technical content designed to educate and empower aspiring and seasoned professionals in the embedded domain.

Throughout his career, Raj has honed a unique skill set that bridges the gap between deep technical understanding and effective communication. His work encompasses a wide range of educational materials, including in-depth tutorials, practical guides, course modules, and insightful articles focused on embedded hardware and software solutions. He possesses a strong grasp of embedded architectures, microcontrollers, real-time operating systems (RTOS), firmware development, and various communication protocols relevant to the embedded industry.

Raj is adept at collaborating closely with subject matter experts, engineers, and instructional designers to ensure the accuracy, completeness, and pedagogical effectiveness of the content. His meticulous attention to detail and commitment to clarity are instrumental in transforming complex embedded concepts into easily digestible and engaging learning experiences. At Embedded Prep, he plays a crucial role in building a robust knowledge base that helps learners master the complexities of embedded technologies.