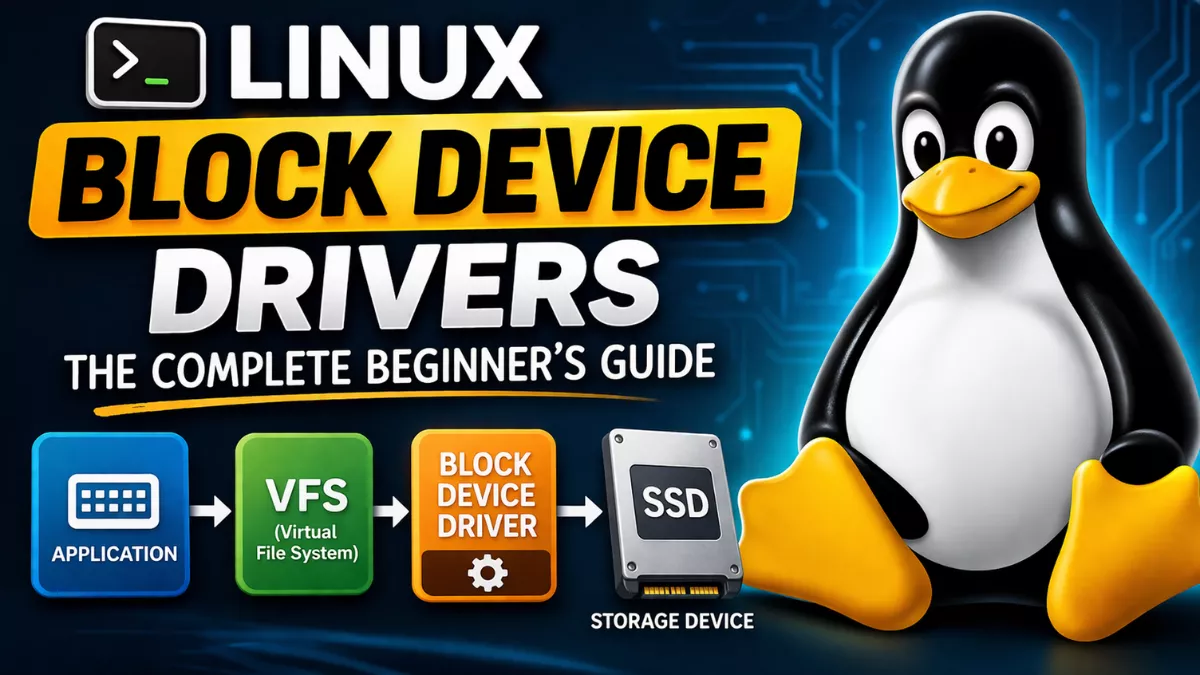

Learn Linux block device drivers from scratch: how they work, how to write one, and how request queues, bio structures, and blk-mq fit together, with real kernel code examples. A complete beginner’s guide.

If you’ve ever wondered how your Linux system actually talks to a hard disk, an SSD, or even a RAM-based virtual disk, block device drivers are the answer. They sit right in the middle, between the kernel’s file system layer and the raw hardware, quietly doing one of the most critical jobs in the entire OS.

This guide is for people who already know C, have some basic Linux kernel awareness, and want to actually understand block device drivers, not just skim a Wikipedia summary. We’re going to go deep. We’ll look at the architecture, write real code, understand request queues, dissect the bio structure, and by the end you’ll have a working skeleton driver you can actually compile and test.

No fluff. No corporate jargon. Just kernel internals explained like we’re sitting at a coffee shop with a whiteboard between us.

1. What Is a Block Device in Linux?

A block device is any storage device that Linux treats as a collection of fixed-size blocks, typically 512 bytes or 4096 bytes each. You can read or write any individual block independently, in any order. That’s the defining characteristic.

Your /dev/sda, /dev/nvme0n1, and /dev/mmcblk0 are all block devices. Even /dev/loop0 (a loopback device backed by a file) is a block device. The kernel doesn’t care if the actual storage is a spinning HDD, an NVMe SSD, an SD card, or a chunk of RAM. As long as the driver exposes the right interface, the kernel’s VFS and file system layers talk to it identically.

The kernel represents block devices under /dev/ and exposes them with major and minor numbers. The major number identifies the driver, and the minor number identifies the specific device or partition that driver manages.

$ ls -l /dev/sda*

brw-rw---- 1 root disk 8, 0 Mar 30 09:15 /dev/sda

brw-rw---- 1 root disk 8, 1 Mar 30 09:15 /dev/sda1

brw-rw---- 1 root disk 8, 2 Mar 30 09:15 /dev/sda2

The b at the start means block device. Major number 8 belongs to the sd driver (SCSI disk). Minor 0 is the whole disk; 1 and 2 are partitions.
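The same numbers can be read from user space. Here is a minimal sketch, assuming a Linux system with glibc (where major(), minor(), and makedev() come from <sys/sysmacros.h>); the helper name blkdev_numbers is made up for this example.

```c
#include <assert.h>
#include <sys/stat.h>
#include <sys/sysmacros.h>   /* major(), minor(), makedev() on glibc */
#include <sys/types.h>

/* Hypothetical helper: look up the major/minor pair of a block device node.
 * Returns 0 on success, -1 if the path is missing or is not a block device. */
static int blkdev_numbers(const char *path, unsigned int *maj, unsigned int *min)
{
    struct stat st;

    assert(path && maj && min);
    if (stat(path, &st) != 0)
        return -1;
    if (!S_ISBLK(st.st_mode))       /* the 'b' in the ls -l output */
        return -1;

    *maj = major(st.st_rdev);       /* identifies the driver */
    *min = minor(st.st_rdev);       /* identifies the disk or partition */
    return 0;
}
```

Calling blkdev_numbers("/dev/sda1", &maj, &min) on the machine from the listing above should yield the same 8 and 1 that ls -l prints; on a regular file or directory it returns -1.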

2. Block Devices vs Character Devices : What’s the Real Difference?

People learning Linux device drivers always hit this question early. Here’s the honest answer:

Character devices move data as a stream, byte by byte, sequentially. Think serial ports, keyboards, audio devices. You can’t seek to byte 4000 on a keyboard; that makes no sense. The kernel doesn’t buffer data for character devices in any sophisticated way; what you write is what gets sent.

Block devices are random-access, block-addressable storage. The kernel’s block layer sits between the file system and the driver. It buffers I/O requests, reorders them for efficiency (that’s the I/O scheduler), merges adjacent requests, and handles caching through the page cache. The driver never talks directly to a file system; it talks to the block layer, which handles all that complexity above it.

This distinction matters a lot when you’re writing a driver. A character driver implements file_operations like read() and write(). A block device driver implements a request handler (blk-mq’s queue_rq, or for bio-based drivers a make_request_fn, which kernels from 5.9 on replace with the submit_bio method in block_device_operations) and registers a gendisk structure. The kernel does the rest of the heavy lifting.

Another practical difference: block devices support partitioning. You can take /dev/sda and carve it into /dev/sda1, /dev/sda2. The partition table is parsed by the kernel when you register the disk. Character devices don’t have this concept.

3. The Linux Kernel I/O Stack : The Big Picture

Before you write a single line of driver code, you need this mental model. The Linux I/O stack has several layers, and knowing where your driver sits changes everything about how you write it.

From top to bottom:

- User Space : your application calls read() or write() on a file

- VFS (Virtual File System) : the generic file system interface. Routes calls to the correct file system (ext4, xfs, btrfs, etc.)

- Page Cache : kernel-managed RAM buffer. If data is already cached, it never even hits the driver

- File System Layer : ext4, xfs, etc. convert file offsets to block addresses

- Block Layer : this is where your driver interfaces. It takes block I/O requests, schedules them, merges them, and dispatches to the driver

- Block Device Driver : your code. Translates kernel requests into hardware commands

- Hardware : the actual disk controller, SSD, or RAM

Your block device driver lives at layer 6. It never sees file names, file offsets, or inodes. All it gets are sector numbers and memory buffers. “Read 8 sectors starting at sector 1024 into this memory address.” That’s the level of abstraction you work at.
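To make that level of abstraction concrete, here is a small user-space sketch of the arithmetic a driver does with such a request. SECTOR_SHIFT and SECTOR_SIZE mirror the kernel’s names but are defined locally here; struct byte_range and sectors_to_bytes are invented for the example.

```c
#include <assert.h>
#include <stdint.h>

#define SECTOR_SHIFT 9                       /* block-layer sectors are 512 bytes */
#define SECTOR_SIZE  (1u << SECTOR_SHIFT)

/* Hypothetical result type: where in the device the I/O lands, in bytes. */
struct byte_range {
    uint64_t offset;
    uint64_t length;
};

/* Convert "nr_sectors starting at start_sector" into a byte range. */
static struct byte_range sectors_to_bytes(uint64_t start_sector, uint32_t nr_sectors)
{
    struct byte_range r;

    /* Guard against a start sector so large that the shift would overflow. */
    assert(start_sector <= (UINT64_MAX >> SECTOR_SHIFT));

    r.offset = start_sector << SECTOR_SHIFT;
    r.length = (uint64_t)nr_sectors << SECTOR_SHIFT;   /* widen before shifting */
    return r;
}
```

For the request quoted above, sectors_to_bytes(1024, 8) gives a byte offset of 524288 and a length of 4096, which is one 4 KiB page of data.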

This is why writing block drivers is actually simpler in some ways than writing file systems: you don’t deal with the complexity of the FS. But it’s harder in other ways because you deal directly with kernel memory management, DMA, and hardware timing.

4. Block Layer Internals : How I/O Requests Actually Flow

The block layer is one of the most sophisticated pieces of the Linux kernel. Here’s what happens between “user calls read()” and “driver gets the request”.

Step 1: The File System Submits a bio

When a file system needs to read or write blocks, it creates a bio (Block I/O) structure and submits it to the block layer using submit_bio(). The bio contains the target block device, the starting sector, the data buffer (as a list of memory pages), and the direction (read or write).

Step 2: The Block Layer Processes the bio

The block layer does several things with incoming bios:

- Merging: If two requests target adjacent sectors, merge them into one. Fewer requests = better throughput, especially on spinning disks.

- Sorting: Reorder requests to minimize seek time on HDDs (the classic “elevator algorithm”).

- Batching: Group requests and dispatch them together to reduce per-request overhead.
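The sorting and merging steps can be sketched in plain user-space C. This is a toy model under loud assumptions: struct io_req and sort_and_merge are invented names, direction (read vs write) is ignored, and the real block layer uses far more elaborate data structures than a qsort over an array.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical, simplified request: a run of sectors on the device. */
struct io_req {
    uint64_t sector;
    uint32_t nr_sectors;
};

static int cmp_sector(const void *a, const void *b)
{
    const struct io_req *x = a, *y = b;
    return (x->sector > y->sector) - (x->sector < y->sector);
}

/* Sort requests by start sector, then merge neighbours whose sector ranges
 * touch end to start, as long as the result stays within max_sectors.
 * Works in place and returns the new number of requests. */
static size_t sort_and_merge(struct io_req *reqs, size_t n, uint32_t max_sectors)
{
    size_t out = 0;

    assert(reqs || n == 0);
    if (n == 0)
        return 0;

    qsort(reqs, n, sizeof(*reqs), cmp_sector);

    for (size_t i = 1; i < n; i++) {
        struct io_req *last = &reqs[out];
        int adjacent = last->sector + last->nr_sectors == reqs[i].sector;
        int fits = (uint64_t)last->nr_sectors + reqs[i].nr_sectors <= max_sectors;

        if (adjacent && fits)
            last->nr_sectors += reqs[i].nr_sectors;   /* back-merge */
        else
            reqs[++out] = reqs[i];                    /* start a new request */
    }
    return out + 1;
}
```

Feeding it requests for sectors 16, 0, 8 (8 sectors each) plus an unrelated one at sector 100 leaves two requests: one covering sectors 0 to 23 and the one at 100.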

Step 3: Driver Receives and Processes the Request

The driver’s request function gets called. It reads the sector address and data buffer from the request, does the actual I/O (memory copy for RAM disks, DMA transfer for real hardware), and signals completion by calling blk_mq_end_request().

The block layer then updates the page cache, wakes up any waiting processes, and the user-space read() call finally returns.

5. The bio Structure : The Kernel’s Fundamental I/O Unit

If you want to write block device drivers, you need to understand struct bio cold. It’s defined in include/linux/blk_types.h and it’s the main data structure that flows through the entire block layer.

struct bio {

struct bio *bi_next; /* request queue link */

struct block_device *bi_bdev; /* target block device */

unsigned int bi_opf; /* operation and flags */

blk_status_t bi_status; /* error status */

struct bvec_iter bi_iter; /* current position */

bio_end_io_t *bi_end_io; /* completion callback */

void *bi_private; /* driver private data */

unsigned short bi_vcnt; /* number of bio_vecs */

struct bio_vec *bi_io_vec; /* the actual data vectors */

};

The key parts:

bi_iter : Where You Are in the I/O

struct bvec_iter {

sector_t bi_sector; /* device sector (512-byte units) */

unsigned int bi_size; /* remaining bytes */

unsigned int bi_idx; /* current bio_vec index */

unsigned int bi_bvec_done; /* bytes done in current bvec */

};

bi_iter.bi_sector tells you which sector on disk this I/O starts at. Always work with this, never with raw byte offsets.

bio_vec : The Scatter-Gather List

A bio doesn’t always point to a single contiguous memory buffer. It uses a scatter-gather list of bio_vec structures, each pointing to a page of memory plus an offset and length within that page.

struct bio_vec {

struct page *bv_page; /* pointer to the page */

unsigned int bv_len; /* length of this segment */

unsigned int bv_offset; /* offset within the page */

};

To iterate over all segments in a bio, use the bio_for_each_segment macro:

struct bio_vec bvec;

struct bvec_iter iter;

bio_for_each_segment(bvec, bio, iter) {

void *kaddr = kmap_atomic(bvec.bv_page);

/* do your I/O with kaddr + bvec.bv_offset, length bvec.bv_len */

kunmap_atomic(kaddr);

}
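If the scatter-gather idea is new, a user-space model may help. In this sketch struct seg stands in for struct bio_vec (a plain pointer replaces the page-plus-offset pair, so no kmap is needed), and copy_segments plays the role of the loop body above. All names are invented for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* User-space stand-in for struct bio_vec. */
struct seg {
    void  *buf;
    size_t len;
};

enum dir { DIR_READ, DIR_WRITE };

/* Walk the segment list and copy each piece to or from `disk`, starting at
 * byte offset `pos`. The caller is assumed to have bounds-checked the range.
 * Returns the total number of bytes transferred. */
static size_t copy_segments(unsigned char *disk, size_t pos,
                            const struct seg *segs, size_t nr_segs, enum dir d)
{
    size_t done = 0;

    assert(disk && (segs || nr_segs == 0));

    for (size_t i = 0; i < nr_segs; i++) {
        if (d == DIR_WRITE)
            memcpy(disk + pos + done, segs[i].buf, segs[i].len);  /* memory -> storage */
        else
            memcpy(segs[i].buf, disk + pos + done, segs[i].len);  /* storage -> memory */
        done += segs[i].len;
    }
    return done;
}
```

The point to notice is that the segments are scattered in memory but land contiguously on the device: two 4-byte buffers written at offset 8 occupy bytes 8 through 15.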

bi_opf : Operation Flags

This field tells you what kind of I/O to do. Use bio_data_dir(bio) to get the direction: READ or WRITE. For writes, you read data from the bio’s pages and write to storage. For reads, you write data into the bio’s pages from storage.

Never try to directly check bi_opf for direction; always use the helper macro. The flags field has multiple bits packed together and you’ll get it wrong if you poke at it directly.
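Here is a user-space sketch of why the naive comparison fails. It assumes the layout the kernel uses (the operation lives in the low 8 bits, flags sit above, and write-type operations have odd numbers), but the OP_ and FLAG_ names and the exact flag bit positions are illustrative, not the kernel’s definitions.

```c
#include <assert.h>
#include <stdbool.h>

#define OP_BITS 8
#define OP_MASK ((1u << OP_BITS) - 1)

/* Operation codes: data-out operations are odd, data-in operations are even. */
enum { OP_READ = 0, OP_WRITE = 1 };

/* Illustrative flag bits stored above the operation. */
#define FLAG_SYNC (1u << (OP_BITS + 3))
#define FLAG_FUA  (1u << (OP_BITS + 9))

/* Pack an operation and its flags into one word, like bi_opf. */
static unsigned int make_opf(unsigned int op, unsigned int flags)
{
    assert((op & ~OP_MASK) == 0);       /* op must fit in the low bits */
    assert((flags & OP_MASK) == 0);     /* flags must not spill into the op */
    return op | flags;
}

/* Extract just the operation. */
static unsigned int opf_op(unsigned int opf)
{
    return opf & OP_MASK;
}

/* Direction test in the spirit of bio_data_dir(): look only at the op. */
static bool opf_is_write(unsigned int opf)
{
    return (opf_op(opf) & 1) != 0;
}
```

With opf = make_opf(OP_WRITE, FLAG_SYNC | FLAG_FUA), the test opf == OP_WRITE is false even though this is a write, while opf_is_write(opf) gives the right answer. That is the bug the helper macros protect you from.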

6. Request Queues and the I/O Scheduler

Every block device in Linux has an associated request queue, struct request_queue. This is the object that sits between the block layer and your driver. It holds pending I/O requests, scheduler configuration, queue limits, and a reference to your driver’s request-handling function.

Queue Limits : Telling the Kernel What Your Device Can Do

One of the most important things you set up when registering a block driver is the queue limits. These tell the block layer what your hardware can and can’t handle, so it can merge and split requests appropriately.

blk_queue_max_hw_sectors(queue, max_sectors);

blk_queue_max_segments(queue, max_segments);

blk_queue_logical_block_size(queue, 512);

blk_queue_physical_block_size(queue, 4096);

If you say your device supports max 256 sectors per request, the block layer will never send you a request larger than that. If you support scatter-gather with up to 128 segments, the layer respects that too. Getting these wrong leads to subtle corruption or panics, so set them carefully.
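As a rough illustration of what "never larger than that" means, the sketch below splits an oversized request into pieces that respect a max-sectors limit. It is a user-space model with invented names (struct chunk, split_request); the block layer’s real splitting code also honors segment counts, boundaries, and alignment.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical piece of a split request. */
struct chunk {
    uint64_t sector;
    uint32_t nr_sectors;
};

/* Split [sector, sector + nr_sectors) into chunks of at most max_sectors.
 * Writes up to out_cap chunks into out and returns how many are needed. */
static size_t split_request(uint64_t sector, uint32_t nr_sectors,
                            uint32_t max_sectors,
                            struct chunk *out, size_t out_cap)
{
    size_t n = 0;

    assert(max_sectors > 0);

    while (nr_sectors > 0) {
        uint32_t take = nr_sectors < max_sectors ? nr_sectors : max_sectors;

        if (n < out_cap) {
            out[n].sector = sector;
            out[n].nr_sectors = take;
        }
        n++;
        sector += take;
        nr_sectors -= take;
    }
    return n;
}
```

A 600-sector request at sector 1000 with a 256-sector limit becomes three chunks of 256, 256, and 88 sectors, starting at sectors 1000, 1256, and 1512.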

I/O Schedulers

The I/O scheduler (also called the block scheduler) decides in what order to dispatch requests from the queue to the driver. Linux has had several over the years:

- CFQ (Completely Fair Queuing) : removed in kernel 5.0, was the default for years

- Deadline : prioritizes requests by deadline, good for SSDs and databases

- BFQ (Budget Fair Queuing) : interactive workload friendly, groups processes

- mq-deadline : the multi-queue version of Deadline, common default today

- none : no reordering, FIFO, used for NVMe and fast storage

You can check and change the scheduler at runtime:

$ cat /sys/block/sda/queue/scheduler

mq-deadline [kyber] bfq none

$ echo none | sudo tee /sys/block/nvme0n1/queue/scheduler

For simple drivers (RAM disks, virtual devices), you usually set the BLK_MQ_F_SHOULD_MERGE flag and let the block layer handle scheduling without worrying about it yourself.

7. Writing Your First Linux Block Device Driver

Enough theory. Let’s write a simple RAM-backed block device driver, essentially a software-defined disk that lives in kernel memory. This is the classic learning exercise for block driver development because it has no hardware complexity, just the pure kernel interfaces.

Our driver will:

- Register a block device with a 16MB RAM disk

- Handle read and write requests

- Be loadable as a kernel module

- Be accessible as /dev/myblk

Header Includes

#include <linux/module.h>

#include <linux/kernel.h>

#include <linux/fs.h>

#include <linux/blkdev.h>

#include <linux/blk-mq.h>

#include <linux/genhd.h>

#include <linux/hdreg.h>

#include <linux/vmalloc.h>

#include <linux/slab.h> /* kzalloc(), kfree() */

MODULE_LICENSE("GPL");

MODULE_AUTHOR("Your Name");

MODULE_DESCRIPTION("Simple RAM Block Device Driver");

Device State Structure

#define MY_BLOCK_MAJOR 240

#define MY_BLOCK_MINORS 1

#define MY_BLOCK_NAME "myblk"

#define KERNEL_SECTOR_SIZE 512

#define MY_DISK_SIZE_MB 16

static int major_num = MY_BLOCK_MAJOR;

static int disk_size_mb = MY_DISK_SIZE_MB;

struct my_block_dev {

unsigned long size; /* size in bytes */

u8 *data; /* the RAM storage */

struct blk_mq_tag_set tag_set;

struct request_queue *queue;

struct gendisk *gd;

};

static struct my_block_dev *dev;

The Request Handler

This is the heart of any block driver. The kernel calls this function when it has I/O work for you to do. In blk-mq style (modern kernel), each request is a struct request that wraps one or more bio structures.

static blk_status_t my_block_request(struct blk_mq_hw_ctx *hctx,

const struct blk_mq_queue_data *bd)

{

struct request *req = bd->rq;

struct my_block_dev *bdev = req->q->queuedata;

struct bio_vec bvec;

struct req_iterator iter;

loff_t pos = blk_rq_pos(req) * KERNEL_SECTOR_SIZE;

loff_t dev_size = bdev->size;

blk_mq_start_request(req);

if (blk_rq_is_passthrough(req)) {

pr_notice("Skip non-fs request\n");

blk_mq_end_request(req, BLK_STS_IOERR);

return BLK_STS_OK;

}

rq_for_each_segment(bvec, req, iter) {

size_t len = bvec.bv_len;

void *buf = page_address(bvec.bv_page) + bvec.bv_offset;

if (pos + len > dev_size) {

pr_err("Request beyond device size\n");

blk_mq_end_request(req, BLK_STS_IOERR);

return BLK_STS_OK;

}

if (rq_data_dir(req) == WRITE)

memcpy(bdev->data + pos, buf, len);

else

memcpy(buf, bdev->data + pos, len);

pos += len;

}

blk_mq_end_request(req, BLK_STS_OK);

return BLK_STS_OK;

}

static const struct blk_mq_ops my_mq_ops = {

.queue_rq = my_block_request,

};

Notice: rq_data_dir(req) returns WRITE or READ. For writes, copy from the bio’s pages into our RAM buffer. For reads, copy from the RAM buffer into the bio’s pages. That’s the entire I/O operation for a RAM disk: just memcpy.
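One detail worth a second look is the bounds check. The handler above checks each segment as it goes, so a request that runs off the end may already have copied some data before it fails. A variation (my suggestion, not something the driver above does) is to validate the whole range once before touching any data. The sketch below shows an overflow-safe form of that check in user-space C; range_in_device is an invented name.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Return true if [pos, pos + len) lies entirely inside a device of
 * dev_size bytes. Written as two comparisons so that pos + len is never
 * computed and therefore cannot wrap around. */
static bool range_in_device(uint64_t pos, uint64_t len, uint64_t dev_size)
{
    /* This model assumes the device is a whole number of 512-byte sectors. */
    assert(dev_size % 512 == 0);

    return pos <= dev_size && len <= dev_size - pos;
}
```

In a queue_rq handler the inputs would be blk_rq_pos(req) converted to bytes and blk_rq_bytes(req), checked once before the segment loop.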

8. The gendisk Structure and Disk Registration

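The struct gendisk is how you tell the kernel “here is a disk.” It holds the device name, capacity, major/minor numbers, and function pointers for partition-related operations. You allocate it with alloc_disk() and register it with add_disk(). Note that alloc_disk() was removed in kernel 5.15 (newer kernels allocate the disk and its queue together with blk_mq_alloc_disk()), so the code below targets older kernels.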

static const struct block_device_operations my_fops = {

.owner = THIS_MODULE,

};

static int __init my_block_init(void)

{

int ret;

/* Allocate device state */

dev = kzalloc(sizeof(*dev), GFP_KERNEL);

if (!dev)

return -ENOMEM;

dev->size = disk_size_mb * 1024 * 1024;

dev->data = vmalloc(dev->size);

if (!dev->data) {

ret = -ENOMEM;

goto err_free_dev;

}

memset(dev->data, 0, dev->size);

/* Set up the tag set for blk-mq */

dev->tag_set.ops = &my_mq_ops;

dev->tag_set.nr_hw_queues = 1;

dev->tag_set.queue_depth = 128;

dev->tag_set.numa_node = NUMA_NO_NODE;

dev->tag_set.cmd_size = 0;

dev->tag_set.flags = BLK_MQ_F_SHOULD_MERGE;

ret = blk_mq_alloc_tag_set(&dev->tag_set);

if (ret)

goto err_free_data;

/* Create the request queue */

dev->queue = blk_mq_init_queue(&dev->tag_set);

if (IS_ERR(dev->queue)) {

ret = PTR_ERR(dev->queue);

goto err_free_tag_set;

}

dev->queue->queuedata = dev;

/* Allocate the gendisk */

dev->gd = alloc_disk(MY_BLOCK_MINORS);

if (!dev->gd) {

ret = -ENOMEM;

goto err_free_queue;

}

dev->gd->major = major_num;

dev->gd->first_minor = 0;

dev->gd->fops = &my_fops;

dev->gd->queue = dev->queue;

dev->gd->private_data = dev;

snprintf(dev->gd->disk_name, DISK_NAME_LEN, "%s", MY_BLOCK_NAME);

/* Set capacity in 512-byte sectors */

set_capacity(dev->gd, dev->size / KERNEL_SECTOR_SIZE);

/* Register the major number */

ret = register_blkdev(major_num, MY_BLOCK_NAME);

if (ret < 0)

goto err_free_gd;

/* Add the disk to the system */

add_disk(dev->gd);

pr_info("myblk: registered with %d MB RAM disk\n", disk_size_mb);

return 0;

err_free_gd:

put_disk(dev->gd);

err_free_queue:

blk_cleanup_queue(dev->queue);

err_free_tag_set:

blk_mq_free_tag_set(&dev->tag_set);

err_free_data:

vfree(dev->data);

err_free_dev:

kfree(dev);

return ret;

}

The Cleanup Function

static void __exit my_block_exit(void)

{

del_gendisk(dev->gd);

put_disk(dev->gd);

blk_cleanup_queue(dev->queue);

blk_mq_free_tag_set(&dev->tag_set);

unregister_blkdev(major_num, MY_BLOCK_NAME);

vfree(dev->data);

kfree(dev);

pr_info("myblk: unregistered\n");

}

module_init(my_block_init);

module_exit(my_block_exit);

Order matters in cleanup: you always reverse the registration order. Call del_gendisk before unregister_blkdev, and free memory last. Getting the order wrong can cause a use-after-free or a kernel panic on module unload.
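The goto ladder in the init function is what keeps that reverse order honest on the error path. Here is a stripped-down user-space model of the pattern that records what happens, so you can see the unwinding order. The event names and the fail_at parameter are invented for the example; only the shape of the labels matches the driver.

```c
#include <assert.h>
#include <stddef.h>

enum event {
    EV_ALLOC_DATA, EV_ALLOC_TAGS, EV_ALLOC_QUEUE,
    EV_FREE_TAGS, EV_FREE_DATA
};

#define MAX_EVENTS 16

/* Log of setup and teardown steps, in the order they ran. */
struct trace {
    enum event events[MAX_EVENTS];
    size_t n;
};

static void record(struct trace *t, enum event e)
{
    assert(t->n < MAX_EVENTS);
    t->events[t->n++] = e;
}

/* Mock init path. fail_at = 0 means success; 1..3 makes that step fail. */
static int setup(struct trace *t, int fail_at)
{
    if (fail_at == 1)
        goto err;
    record(t, EV_ALLOC_DATA);

    if (fail_at == 2)
        goto err_free_data;
    record(t, EV_ALLOC_TAGS);

    if (fail_at == 3)
        goto err_free_tag_set;
    record(t, EV_ALLOC_QUEUE);
    return 0;

    /* Labels fall through, so a later failure undoes everything before it. */
err_free_tag_set:
    record(t, EV_FREE_TAGS);
err_free_data:
    record(t, EV_FREE_DATA);
err:
    return -1;
}
```

When the third step fails, the log reads: alloc data, alloc tags, free tags, free data. Each label undoes exactly one step and falls through to the next, which is why adding a new init step means adding one label in the matching position.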

9. The make_request Approach : Bypassing the Request Queue

There are two main styles of block driver in Linux. The request-queue style (what we’ve been building with blk-mq) buffers requests, lets the scheduler sort them, and dispatches them to your handler. This is good for real hardware where reordering matters.

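The other style uses blk_queue_make_request() to register a custom function that bypasses the scheduler entirely. (That call exists only in kernels before 5.7; later kernels pass the function to blk_alloc_queue(), and from 5.9 on it is the submit_bio method in block_device_operations.) Every bio comes straight to your function with no buffering. This is ideal for: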

- RAM disks (seek time is zero, no benefit to reordering)

- Stacked drivers (like dm-crypt, RAID) that pass I/O through to other devices

- Virtual devices where latency matters more than throughput optimization

static blk_qc_t my_make_request(struct request_queue *q, struct bio *bio)

{

struct my_block_dev *bdev = q->queuedata;

struct bio_vec bvec;

struct bvec_iter iter;

loff_t pos = bio->bi_iter.bi_sector * KERNEL_SECTOR_SIZE;

bio_for_each_segment(bvec, bio, iter) {

void *buf = kmap_atomic(bvec.bv_page) + bvec.bv_offset;

size_t len = bvec.bv_len;

if (bio_data_dir(bio) == WRITE)

memcpy(bdev->data + pos, buf, len);

else

memcpy(buf, bdev->data + pos, len);

kunmap_atomic(buf - bvec.bv_offset);

pos += len;

}

bio_endio(bio);

return BLK_QC_T_NONE;

}

/* During init, instead of blk_mq_init_queue: */

dev->queue = blk_alloc_queue(GFP_KERNEL);

blk_queue_make_request(dev->queue, my_make_request);

Note the use of kmap_atomic(): it temporarily maps a high-memory page into the kernel’s virtual address space so you can access it. Always pair it with kunmap_atomic(). Forgetting this causes silent data corruption on 32-bit systems with more than 1GB RAM, or just panics outright. Newer kernels deprecate kmap_atomic() in favor of kmap_local_page() and kunmap_local().

10. Multi-Queue Block Layer (blk-mq) : The Modern Way

Before kernel 3.13, Linux had a single request queue per device. All requests serialized through one lock. This was fine when a disk could do maybe 100-200 IOPS. Modern NVMe SSDs do millions of IOPS. A single queue becomes a catastrophic bottleneck.

The multi-queue block layer (blk-mq) solved this. It gives each CPU (or NUMA node) its own software queue, and maps those to multiple hardware queues if the device supports it. NVMe SSDs commonly have 32+ hardware queues. blk-mq can feed all of them in parallel with zero lock contention between CPUs.

The architecture looks like this:

- Software Queues : one per CPU, handles submissions from that CPU without locking others

- Hardware Queues : one per device queue (for example, an NVMe submission/completion queue pair), your driver processes these

- Mapping : blk-mq maps many software queues to fewer (or more) hardware queues automatically

When you call blk_mq_alloc_tag_set(), you configure this mapping:

dev->tag_set.nr_hw_queues = num_online_cpus(); /* one HW queue per CPU */

dev->tag_set.queue_depth = 256; /* max outstanding requests per queue */

For a RAM disk or simple driver, nr_hw_queues = 1 is fine. For a real NVMe driver, you’d query the hardware for the actual number of queues it supports and set this accordingly.
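To get a feel for the mapping, here is a deliberately naive sketch: a plain round-robin assignment of CPUs to hardware queues. This is an assumption made for illustration only. The real blk-mq mapping takes CPU topology and interrupt affinity into account, and cpu_to_hw_queue is not a kernel function.

```c
#include <assert.h>

/* Naive model: spread CPUs over hardware queues round-robin. */
static unsigned int cpu_to_hw_queue(unsigned int cpu, unsigned int nr_hw_queues)
{
    assert(nr_hw_queues > 0);
    return cpu % nr_hw_queues;
}
```

With nr_hw_queues = 1 every CPU lands on queue 0, which is the RAM disk case. With two queues, CPU 5 would submit to queue 1; with at least as many queues as CPUs, each CPU gets a queue of its own.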

Tags and Request Tracking

blk-mq uses a tag system to track in-flight requests. Each request gets a tag (an integer ID) when it’s submitted, and you use that tag to identify and complete it later. This is crucial for asynchronous I/O where you submit a request to hardware and get an interrupt later when it’s done.

/* In your queue_rq handler: */

blk_mq_start_request(req); /* mark it as started, tag is active */

/* ... submit to hardware, store req somewhere indexed by tag ... */

/* In your interrupt handler or completion poll: */

blk_mq_end_request(req, BLK_STS_OK); /* or BLK_STS_IOERR on error */
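The tag idea itself is simple enough to model in a few lines of user-space C. The sketch below uses a 32-bit bitmap as the tag pool and an array indexed by tag to remember which request is in flight. struct tag_pool, tag_alloc, and tag_complete are invented names; blk-mq’s own allocator is a scalable per-CPU-friendly bitmap, not this linear scan.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define QUEUE_DEPTH 32

/* Toy tag pool: bit N of `busy` is set while tag N is in flight. */
struct tag_pool {
    uint32_t busy;
    void *inflight[QUEUE_DEPTH];
};

/* Reserve the lowest free tag for `req`. Returns the tag, or -1 if the
 * queue is full and the caller must wait for a completion. */
static int tag_alloc(struct tag_pool *p, void *req)
{
    for (int tag = 0; tag < QUEUE_DEPTH; tag++) {
        uint32_t bit = UINT32_C(1) << tag;

        if (!(p->busy & bit)) {
            p->busy |= bit;
            p->inflight[tag] = req;
            return tag;
        }
    }
    return -1;
}

/* Completion path: the hardware reports a tag, we find the request. */
static void *tag_complete(struct tag_pool *p, int tag)
{
    void *req;

    assert(tag >= 0 && tag < QUEUE_DEPTH);
    assert(p->busy & (UINT32_C(1) << tag));   /* completing an idle tag is a bug */

    req = p->inflight[tag];
    p->inflight[tag] = NULL;
    p->busy &= ~(UINT32_C(1) << tag);
    return req;
}
```

The second assert in tag_complete is the user-space cousin of the double-completion bug: finishing a tag that is not in flight means something upstream has gone wrong.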

11. Handling Partitions in Block Drivers

When you register a disk with add_disk(), the kernel automatically reads the partition table from the device’s first sectors (or GPT header) and creates the partition device nodes for you. You don’t manually create /dev/myblk1 — the kernel does it.

The number of minors you allocate when calling alloc_disk() controls how many partitions your device can have. If you call alloc_disk(16), you get minor numbers 0 through 15, meaning 15 possible partitions (minor 0 is the whole disk).

For a partition, the block layer translates the sector offset automatically. If partition 1 starts at sector 2048, and a request comes in for sector 0 of partition 1, your driver sees sector 2048. The translation is completely transparent. This is one of the great things about the kernel’s partition handling — your driver doesn’t need to know anything about partitions.
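The translation amounts to one addition plus a bounds check. Here is a user-space sketch of it; struct partition and remap_to_disk are names made up for the example, not the kernel’s.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical partition description, in 512-byte sectors. */
struct partition {
    uint64_t start_sector;   /* where the partition begins on the disk */
    uint64_t nr_sectors;     /* partition length */
};

/* Translate a partition-relative request into a whole-disk sector.
 * Returns 0 on success, -1 if the request would run past the partition. */
static int remap_to_disk(const struct partition *part, uint64_t sector,
                         uint32_t nr_sectors, uint64_t *disk_sector)
{
    assert(part && disk_sector);

    if (sector > part->nr_sectors || nr_sectors > part->nr_sectors - sector)
        return -1;

    *disk_sector = part->start_sector + sector;
    return 0;
}
```

For a partition starting at sector 2048, a request for its sector 0 comes out as disk sector 2048, matching the example above. A request that crosses the end of the partition is rejected before the driver ever sees it.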

To force the kernel to re-read a partition table after you’ve modified it (like after writing a new MBR), use:

ioctl(fd, BLKRRPART, 0);

/* or from command line: */

/* blockdev --rereadpt /dev/myblk */

12. Compiling, Loading, and Testing Your Driver

The Makefile

obj-m += myblk.o

KDIR ?= /lib/modules/$(shell uname -r)/build

all:
	$(MAKE) -C $(KDIR) M=$(PWD) modules

clean:
	$(MAKE) -C $(KDIR) M=$(PWD) clean

$ make

$ sudo insmod myblk.ko

$ dmesg | tail -5

[12345.678] myblk: registered with 16 MB RAM disk

$ ls /dev/myblk

/dev/myblk

Creating a File System and Mounting

$ sudo mkfs.ext4 /dev/myblk

$ sudo mkdir /mnt/myblk

$ sudo mount /dev/myblk /mnt/myblk

$ df -h /mnt/myblk

Filesystem Size Used Avail Use% Mounted on

/dev/myblk 15M 152K 14M 2% /mnt/myblk

$ echo "hello kernel world" | sudo tee /mnt/myblk/test.txt

$ cat /mnt/myblk/test.txt

hello kernel world

If that works, congratulations. You have a working Linux block device driver. The kernel’s ext4 file system is writing to and reading from your driver’s RAM buffer through the full kernel I/O stack.

Stress Testing with fio

$ sudo fio --name=test --filename=/dev/myblk --rw=randrw \

--bs=4k --size=8M --numjobs=4 --time_based --runtime=10 \

--group_reporting

This hits your driver with random 4KB reads and writes from 4 parallel jobs. Watch for kernel panics, data corruption, or lockups. If it runs clean for the full 10 seconds, your locking and memory handling are probably okay.

Checking /proc and /sys

$ cat /proc/devices | grep myblk

240 myblk

$ ls /sys/block/myblk/

alignment_offset capability dev holders inflight power

queue range removable ro size slaves stat subsystem uevent

$ cat /sys/block/myblk/size

32768 # in 512-byte sectors = 16MB

$ cat /sys/block/myblk/queue/logical_block_size

512

13. Real-World Block Driver Examples in the Kernel

The best way to get better at block driver development after writing your own toy driver is to read real drivers in the kernel tree. Here are the ones worth studying, in order of increasing complexity:

drivers/block/brd.c — RAM Disk Driver

This is the official kernel RAM disk driver (/dev/ram0, etc.). It’s very similar to what we built, but handles multiple devices, proper memory management with radix trees, and the make_request style. Read this first. About 400 lines, very approachable.

drivers/block/loop.c — Loopback Driver

This backs a block device with a regular file. When you do losetup /dev/loop0 disk.img, this driver reads and writes disk.img from kernel context on behalf of the block device. Current versions use blk-mq and show how to hand I/O off to worker threads for asynchronous processing. Medium complexity.

drivers/block/null_blk/ — Null Block Driver

This is a high-performance benchmarking driver that, by default, completes requests without transferring any data. It’s used to benchmark the block layer overhead itself. It supports blk-mq with multiple queues, optional latency injection, and configuration through module parameters and configfs. This is a goldmine for learning advanced blk-mq patterns.

drivers/nvme/host/ — NVMe Driver

The real NVMe driver is one of the most sophisticated block drivers in the kernel. Multiple hardware queues, PCI DMA, interrupt-based completion, NVMe command sets — it’s all there. Read this only after you’re comfortable with the basics. But when you are, it shows you exactly how production-quality block drivers handle real hardware.

drivers/md/ — Software RAID and dm

The MD (Multiple Devices) layer and Device Mapper (dm) are stacked block drivers — they sit on top of other block devices and provide RAID, encryption (dm-crypt), LVM, thin provisioning, etc. These are fascinating because they show how to stack block devices and how the kernel handles the same bio being processed by multiple drivers in sequence.

14. Debugging Linux Block Device Drivers

Kernel debugging is a whole different world from userspace debugging. You can’t attach GDB and step through kernel code easily (well, you can with KGDB, but it’s painful). Here are the practical techniques that actually work.

pr_info, pr_err, printk

Your first tool is always printk-based logging. Use pr_info(), pr_warn(), and pr_err(). They prepend your module name automatically if you define pr_fmt at the top of your file, before any #include:

#define pr_fmt(fmt) KBUILD_MODNAME ": " fmt

Then all your pr_info() calls show up in dmesg as myblk: your message here. Check dmesg -w in a terminal while loading and exercising your module.

QEMU + GDB for Real Debugging

The real way to debug kernel drivers without destroying your actual system is to run Linux in QEMU and attach GDB to it. QEMU supports -s -S flags that start it with a GDB stub paused at the first instruction.

$ qemu-system-x86_64 -kernel bzImage -initrd initrd.img \

-append "console=ttyS0 nokaslr" -nographic -s -S

# In another terminal:

$ gdb vmlinux

(gdb) target remote :1234

(gdb) break my_block_request

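(gdb) continue

This lets you set breakpoints in your driver code, inspect kernel data structures, and single-step through the request handler. If the driver is built as a module, GDB can only resolve my_block_request after the module’s symbols are loaded, for example with add-symbol-file and the module’s load address. It’s the closest thing to real debugging you’ll get in the kernel world.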

CONFIG_DEBUG_BLOCK_EXT_DEVT and Block-Specific Debugging

The kernel has several Kconfig options specifically for block layer debugging:

- CONFIG_BLK_DEBUG_FS : exposes detailed blk-mq debugging info under /sys/kernel/debug/block/

- CONFIG_BLK_DEV_IO_TRACE : enables blktrace, a powerful tool for recording every I/O event

- CONFIG_FAIL_MAKE_REQUEST : fault injection for block requests

blktrace and blkparse

blktrace is a dedicated block I/O tracing tool. It hooks into the kernel’s block trace points and records every event — bio submission, queue insertion, driver dispatch, completion — with nanosecond timestamps.

$ sudo blktrace -d /dev/myblk -o trace

$ sudo blkparse trace.blktrace.0 | head -50

This output shows you exactly what the block layer is doing with your device. Great for diagnosing scheduler behavior, finding unexpected I/O patterns, and verifying that your driver is completing requests correctly.

15. Common Mistakes Beginners Make With Block Device Drivers

These mistakes have caused panics for probably every kernel developer who’s gone through this learning curve. Learn from them instead of experiencing them firsthand.

Mistake 1: Not Calling blk_mq_start_request

In blk-mq, you must call blk_mq_start_request(req) before doing any processing. Skipping this causes the timeout mechanism to fire incorrectly and you’ll see mysterious “request timeout” errors or double-completions that panic the kernel.

Mistake 2: Wrong Sector Arithmetic

Sectors are always 512 bytes in the block layer’s address space, even if your device uses 4096-byte physical blocks. blk_rq_pos(req) returns the offset in 512-byte units. Always multiply by KERNEL_SECTOR_SIZE (512) to get bytes. Getting this wrong causes reads to return garbage and writes to corrupt data in non-obvious ways.

Mistake 3: Sleeping in Atomic Context

Your request handler might be called in an atomic context (interrupts disabled, spinlock held). Calling anything that can sleep — kmalloc(GFP_KERNEL), mutex_lock(), copy_to_user() — in this context causes a lockdep warning or a kernel crash. Always use GFP_ATOMIC for allocations in the request path, and do all blocking work in a separate kernel thread or workqueue.

Mistake 4: Not Handling Bio Completion Correctly

Every bio that comes to your driver must be completed — either with bio_endio(bio) (success), bio_io_error(bio) (error), or through request-level completion via blk_mq_end_request(). Forgetting to complete a bio hangs the process that submitted it forever. It won’t crash the kernel immediately — it’ll just hang, which is often harder to debug than a crash.

Mistake 5: Cleanup Order Errors

The exit path cleanup order is the reverse of the init path setup order. This sounds obvious but it’s easy to get wrong when you’re debugging and adding/removing init steps. A wrong cleanup order causes use-after-free bugs, double-free crashes, or hung processes when you do rmmod.

Mistake 6: Ignoring Request Flush and FUA

If your device claims to have a write cache (and some real hardware does), you need to handle flush requests. When an application calls fsync(), the file system submits a special REQ_PREFLUSH or REQ_FUA (Force Unit Access) request to make sure data is actually on stable storage. Ignoring these means fsync() appears to succeed but data could be lost on power failure. For a simple RAM disk driver this doesn’t matter, but for any driver that interfaces with real hardware with write caches, this is critical for data integrity.
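A toy model makes the failure mode easier to see. In the sketch below a "device" has a volatile write cache in front of its stable media; plain writes only reach the cache, a flush commits everything dirty, and a FUA write goes straight through. Everything here (struct cached_dev, one-byte blocks, the power_loss helper) is invented for illustration and says nothing about how a particular piece of hardware behaves.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

#define NBLOCKS 8

/* Toy device: one byte per block, with a volatile write-back cache. */
struct cached_dev {
    unsigned char media[NBLOCKS];   /* survives power loss */
    unsigned char cache[NBLOCKS];   /* lost on power loss */
    bool dirty[NBLOCKS];
};

/* Write one block. With fua set, the data is on media before we return. */
static void dev_write(struct cached_dev *d, size_t blk, unsigned char val, bool fua)
{
    assert(blk < NBLOCKS);

    d->cache[blk] = val;
    d->dirty[blk] = true;
    if (fua) {
        d->media[blk] = val;
        d->dirty[blk] = false;
    }
}

/* Flush: commit every dirty block to media. */
static void dev_flush(struct cached_dev *d)
{
    for (size_t i = 0; i < NBLOCKS; i++) {
        if (d->dirty[i]) {
            d->media[i] = d->cache[i];
            d->dirty[i] = false;
        }
    }
}

/* Simulated power failure: whatever was only in the cache is gone. */
static void power_loss(struct cached_dev *d)
{
    memcpy(d->cache, d->media, NBLOCKS);
    memset(d->dirty, 0, sizeof(d->dirty));
}
```

A plain write followed by power_loss leaves the media untouched, which is exactly the "fsync() succeeded but the data is gone" scenario when a driver drops flush requests. Writing and then flushing, or writing with fua set, survives the power loss.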

16. What to Learn Next

You’ve got the fundamentals of Linux block device driver development down. Here’s where to go from here depending on your goals:

If You Want to Work on Real Storage Hardware

Learn DMA (Direct Memory Access). Real drivers don’t use memcpy; they program the hardware’s DMA controller to transfer data directly between device and RAM without CPU involvement. Study the kernel’s DMA API: dma_alloc_coherent(), dma_map_sg(), dma_sync_single_for_device(). The NVMe driver is the best example of DMA in block drivers.

If You’re Interested in Storage Stacking (LVM, RAID, Encryption)

Learn the Device Mapper framework (drivers/md/dm.c). Device mapper lets you build complex storage topologies by stacking block devices. dm-crypt (full disk encryption), dm-thin (thin provisioning), and LVM all use it. Writing a simple dm target is a great exercise — you implement a transform function that maps bio addresses from the virtual device to the underlying real device.

If You’re Targeting Embedded or Automotive Systems

Look at UFS (Universal Flash Storage) and eMMC drivers in drivers/scsi/ufs/ (moved to drivers/ufs/ in kernel 5.19) and drivers/mmc/. These are the storage interfaces on embedded SoCs, phones, and automotive infotainment systems. The MMC subsystem has its own host controller abstraction on top of the block layer, and understanding it opens up a huge class of embedded storage work.

Resources Worth Reading

- Linux Device Drivers, 3rd Edition (Corbet, Rubini, Kroah-Hartman) : free online, chapter 16 is specifically on block drivers. Note: it’s old (2.6 era), but the concepts are solid even if the specific APIs changed.

- The kernel source itself under Documentation/block/ : especially blk-mq.rst and biodoc.rst

- Jonathan Corbet’s LWN.net articles on the block layer : search “LWN block layer” and you’ll find years of excellent coverage of how the block layer evolved

- The null_blk driver source for advanced blk-mq patterns

Quick Reference: Block Driver API Cheat Sheet

| Function / Macro | Purpose |
|---|---|
| register_blkdev(major, name) | Register a major number for your driver |
| unregister_blkdev(major, name) | Unregister on module exit |
| alloc_disk(minors) | Allocate a gendisk structure |
| add_disk(gd) | Make disk visible to the kernel |
| del_gendisk(gd) | Remove disk from the kernel |
| put_disk(gd) | Release gendisk reference |
| set_capacity(gd, sectors) | Set disk size in 512-byte sectors |
| blk_mq_alloc_tag_set(ts) | Allocate blk-mq tag set |
| blk_mq_init_queue(ts) | Create a request queue |
| blk_cleanup_queue(q) | Destroy a request queue |
| blk_mq_start_request(req) | Mark request as in-flight |
| blk_mq_end_request(req, status) | Complete a request |
| blk_rq_pos(req) | Get starting sector of a request |
| rq_data_dir(req) | Get direction: READ or WRITE |
| rq_for_each_segment(bvec, req, iter) | Iterate over request segments |
| bio_data_dir(bio) | Get bio direction: READ or WRITE |
| bio_for_each_segment(bvec, bio, iter) | Iterate over bio segments |
| bio_endio(bio) | Complete a bio (success) |
| submit_bio(bio) | Submit a bio to block layer |
| kmap_atomic(page) | Map a page into kernel virtual space |
| kunmap_atomic(addr) | Unmap after use |

Wrapping Up

Block device drivers are where kernel development gets really interesting. You’re operating at the exact boundary between software and hardware — translating abstract kernel requests into concrete I/O operations. Every filesystem on every Linux system depends on block drivers working correctly, which means this is some of the most impactful kernel code you can write.

The path forward is clear: start with the RAM disk driver we built here, get it compiling and running, then start reading the real kernel drivers. brd.c, then null_blk, then NVMe if you’re aiming for high-performance storage. Each one will teach you something the simpler ones don’t cover.

The kernel community is also genuinely welcoming to new contributors. If you find a bug in a block driver or have a performance improvement, submitting a patch to the linux-block mailing list is a realistic goal — even for someone who’s been doing this for just a few months.

Good luck, and keep reading dmesg.

Have questions about a specific part of block driver development? Drop a comment below. Happy to go deeper on any of these topics.

Frequently Asked Questions: Linux Block Device Drivers

Q1. What is a block device driver in Linux? A block device driver is a kernel module that allows Linux to communicate with storage hardware like hard disks, SSDs, and SD cards by exposing them as fixed-size, randomly accessible block devices under /dev/.

Q2. What is the difference between a block device and a character device in Linux? Block devices support random access and are buffered through the kernel’s page cache. Character devices stream data sequentially with no buffering. Storage devices use block drivers while serial ports and keyboards use character drivers.

Q3. What is the bio structure in the Linux kernel? bio stands for Block I/O and is the fundamental data structure the kernel uses to represent a single I/O operation. It contains the target device, starting sector, data buffers as scatter-gather page vectors, and a completion callback function.

Q4. What is blk-mq in Linux? blk-mq stands for Multi-Queue Block Layer and is the modern Linux block I/O framework that replaced the old single-queue design. It gives each CPU its own software queue and maps them to multiple hardware queues, enabling millions of IOPS for NVMe SSDs.

Q5. How do I write a simple Linux block device driver? You allocate a gendisk structure, set up a blk-mq tag set and request queue, implement a queue_rq handler that processes read and write requests, register a major number with register_blkdev(), and call add_disk() to make the device visible to the kernel.

Q6. What is gendisk in the Linux kernel? struct gendisk is the kernel structure that represents a physical or virtual disk. It holds the device name, major and minor numbers, capacity in sectors, partition information, and a pointer to the block_device_operations function table.

Q7. What is a request queue in Linux block drivers? A request queue sits between the block layer and your driver. It holds pending I/O requests, manages the I/O scheduler, enforces hardware limits like max sectors and max segments, and dispatches ready requests down to your driver’s handler function.

Q8. What is the difference between make_request and request queue style drivers? Request queue style buffers and schedules I/O before dispatching it to your driver, which is good for real spinning hardware. make_request style bypasses the scheduler entirely and sends every bio straight to your function, which is ideal for RAM disks, virtual devices, and stacked drivers. Since kernel 5.9 this bio-based entry point is the submit_bio method in block_device_operations rather than a separate make_request function.

Q9. How does Linux handle disk partitions in block drivers? When you call add_disk(), the kernel automatically reads the partition table and creates all partition device nodes for you. The block layer translates partition-relative sector offsets to absolute disk offsets transparently so your driver never needs to handle any partition logic itself.
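The translation described in Q9 is simple arithmetic, and a small userspace sketch shows what the block layer does on your driver’s behalf. The names struct part_info and part_to_disk_sector() are hypothetical and exist only for this illustration; the kernel’s own bookkeeping is more involved.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, simplified view of a partition: where it starts on the
 * whole disk and how many 512-byte sectors it spans. */
struct part_info {
    uint64_t start_sect;
    uint64_t nr_sects;
};

/* Convert a partition-relative request into an absolute disk sector.
 * Returns 0 on success, or -1 if the request would run past the end of
 * the partition. */
int part_to_disk_sector(const struct part_info *p, uint64_t rel_sector,
                        uint64_t nr, uint64_t *abs_sector)
{
    assert(p != NULL && abs_sector != NULL);

    /* Written as a subtraction so the check itself cannot overflow. */
    if (rel_sector > p->nr_sects || nr > p->nr_sects - rel_sector)
        return -1;

    *abs_sector = p->start_sect + rel_sector;
    return 0;
}
```

For a partition that starts at sector 2048, a read of sector 100 inside the partition becomes a read of sector 2148 on the disk, which is the only address your driver ever sees.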

Q10. What is the I/O scheduler in Linux and how does it affect block drivers? The I/O scheduler reorders and merges block requests to improve throughput and reduce seek time on spinning disks. Common schedulers today include mq-deadline, BFQ, and none. For fast NVMe storage none is usually best. Your driver influences scheduling behavior through queue limits and flags you set during initialization.
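As a rough illustration of the merging mentioned in Q10, the toy userspace sketch below folds requests that touch back-to-back sectors into one larger request. It is a deliberately simplified model under stated assumptions: struct io_req and merge_adjacent() are hypothetical names, the input must already be sorted by sector, and real schedulers also handle front merges, direction, and many more queue limits.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, simplified request: a run of contiguous 512-byte sectors. */
struct io_req {
    uint64_t sector;    /* starting sector */
    uint32_t nr_sects;  /* length in sectors */
};

/* Merge neighbouring requests in place. The array must be sorted by
 * starting sector. A request is folded into its predecessor only when it
 * starts exactly where the predecessor ends and the combined length stays
 * within max_sects. Returns the number of requests left in the array. */
size_t merge_adjacent(struct io_req *reqs, size_t n, uint32_t max_sects)
{
    size_t out = 0;  /* index of the last request kept so far */

    if (n == 0)
        return 0;
    assert(reqs != NULL);

    for (size_t i = 1; i < n; i++) {
        struct io_req *prev = &reqs[out];
        int contiguous = prev->sector + prev->nr_sects == reqs[i].sector;
        int fits = (uint64_t)prev->nr_sects + reqs[i].nr_sects <= max_sects;

        if (contiguous && fits)
            prev->nr_sects += reqs[i].nr_sects;  /* back merge */
        else
            reqs[++out] = reqs[i];               /* keep as a separate request */
    }
    return out + 1;
}
```

The max_sects argument stands in for the kind of hardware limit a driver advertises through its queue limits: lowering it prevents merges that would exceed what the device can take in one request.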

Q11. How do I debug a Linux block device driver? Use pr_info and pr_err with dmesg for basic logging. Use blktrace to record every block I/O event with timestamps. Use QEMU combined with GDB for proper breakpoint-level debugging without risking your real system. Enable CONFIG_BLK_DEBUG_FS to expose detailed blk-mq internals under /sys/kernel/debug/block/.

Q12. What are the most common mistakes in block device driver development? The most common mistakes are forgetting to call blk_mq_start_request(), getting sector-to-byte arithmetic wrong, sleeping inside atomic context, failing to complete every bio, using the wrong cleanup order in the module exit function, and ignoring flush and FUA requests on hardware with write caches.
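The sector-to-byte mistake from Q12 is easy to reproduce outside the kernel. The sketch below uses hypothetical helper names (sector_to_bytes(), sector_to_bytes_narrow(), range_in_bounds()) and assumes the kernel convention that a sector is always 512 bytes; it contrasts a correct 64-bit conversion with a 32-bit one that silently wraps.

```c
#include <assert.h>
#include <stdint.h>

#define SKETCH_SECTOR_SHIFT 9  /* 512-byte sectors: 1 << 9 */

/* Correct: the whole computation is done in 64 bits. */
uint64_t sector_to_bytes(uint64_t sector)
{
    /* Guard against shifting bits off the top of a 64-bit value. */
    assert(sector <= (UINT64_MAX >> SKETCH_SECTOR_SHIFT));
    return sector << SKETCH_SECTOR_SHIFT;
}

/* Buggy on purpose: truncating to 32 bits before the shift wraps for any
 * offset of 4 GiB or more. */
uint32_t sector_to_bytes_narrow(uint64_t sector)
{
    return (uint32_t)sector << SKETCH_SECTOR_SHIFT;
}

/* Check that [sector, sector + nr_sects) fits inside a device holding
 * capacity_sects sectors, without overflowing the addition. */
int range_in_bounds(uint64_t sector, uint64_t nr_sects,
                    uint64_t capacity_sects)
{
    return sector <= capacity_sects && nr_sects <= capacity_sects - sector;
}
```

Sector 8388608 is exactly 4 GiB into the device: the 64-bit helper returns 4294967296, while the narrow version wraps around to 0 and would quietly read or write the start of the disk instead.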

Q13. What kernel source files should I study to learn block driver development? Start with drivers/block/brd.c for the RAM disk reference driver, then move to drivers/block/loop.c for the loopback driver, then drivers/block/null_blk/ for advanced blk-mq patterns, and finally drivers/nvme/host/ when you are ready to see a full DMA-based production driver.

Q14. Can I format and mount a custom block device driver like a real disk? Yes. Once your driver is loaded and the device node appears under /dev/, you can run mkfs.ext4 on it, mount it to any directory, and read and write files normally through it. The kernel file system layer is completely unaware it is talking to a custom driver.

Q15. What should I learn after mastering Linux block device drivers? The natural next steps are the kernel DMA API for real hardware data transfers, the Device Mapper framework for building stacked drivers like dm-crypt and LVM, and the UFS and eMMC subsystems if you are targeting embedded or automotive storage platforms.

For a detailed understanding of Platform Devices and Drivers on Linux, refer to the official Linux documentation on Platform Devices and Drivers.

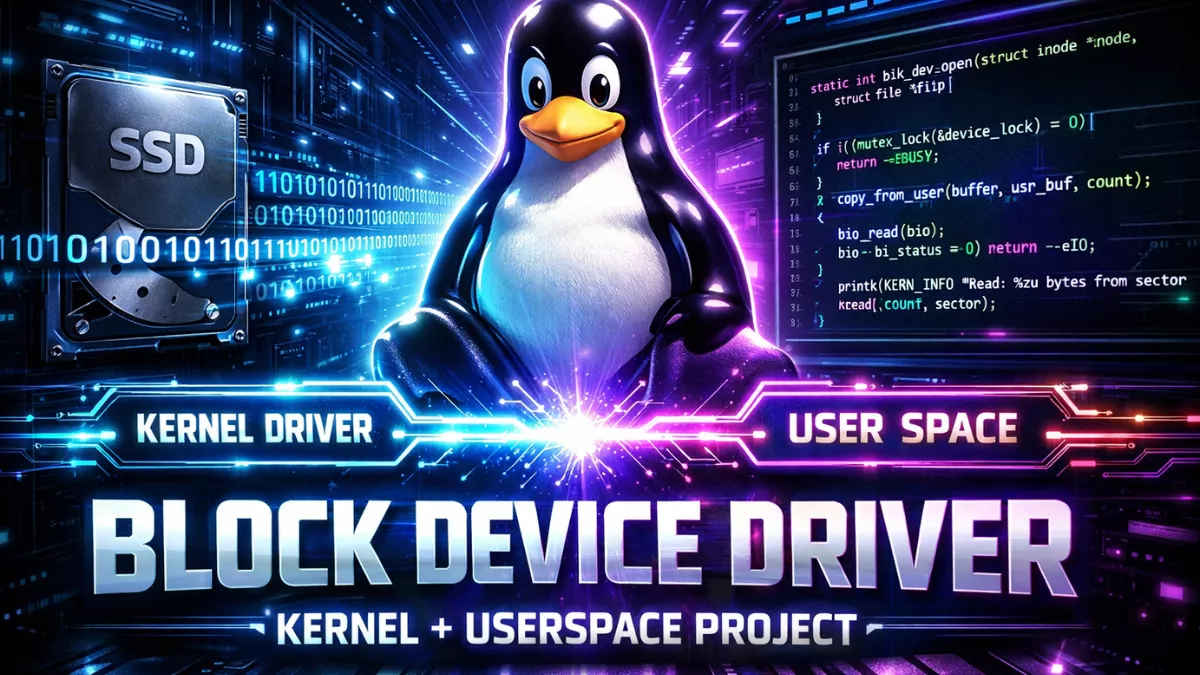

If you’re interested in going beyond theory and building a real-world implementation, check out this Complete Linux Block Device Driver Project that covers both kernel-level driver development and userspace interaction. It walks you step-by-step through creating a functional block driver, handling I/O requests, and building a userspace program to communicate with the kernel module. This is perfect for beginners who want hands-on experience with Linux device drivers.

Mr. Raj Kumar is a highly experienced Technical Content Engineer with 7 years of dedicated expertise in the intricate field of embedded systems. At Embedded Prep, Raj is at the forefront of creating and curating high-quality technical content designed to educate and empower aspiring and seasoned professionals in the embedded domain.

Throughout his career, Raj has honed a unique skill set that bridges the gap between deep technical understanding and effective communication. His work encompasses a wide range of educational materials, including in-depth tutorials, practical guides, course modules, and insightful articles focused on embedded hardware and software solutions. He possesses a strong grasp of embedded architectures, microcontrollers, real-time operating systems (RTOS), firmware development, and various communication protocols relevant to the embedded industry.

Raj is adept at collaborating closely with subject matter experts, engineers, and instructional designers to ensure the accuracy, completeness, and pedagogical effectiveness of the content. His meticulous attention to detail and commitment to clarity are instrumental in transforming complex embedded concepts into easily digestible and engaging learning experiences. At Embedded Prep, he plays a crucial role in building a robust knowledge base that helps learners master the complexities of embedded technologies.